Artificial intelligence (AI) is reshaping the very fabric of human interactions with digital products. With a skyrocketing AI software market, everyone — be it a CEO, a product owner, or a junior designer — needs to get on board with using AI in UX.

So how about we dive into the AI principles in UX design together? You will learn more about key AI advancements, their impact on UX, and the power they hold to push digital products further. All of it comes with real-world examples, covering both AI implemented in final products and AI tools used to speed up the design process.

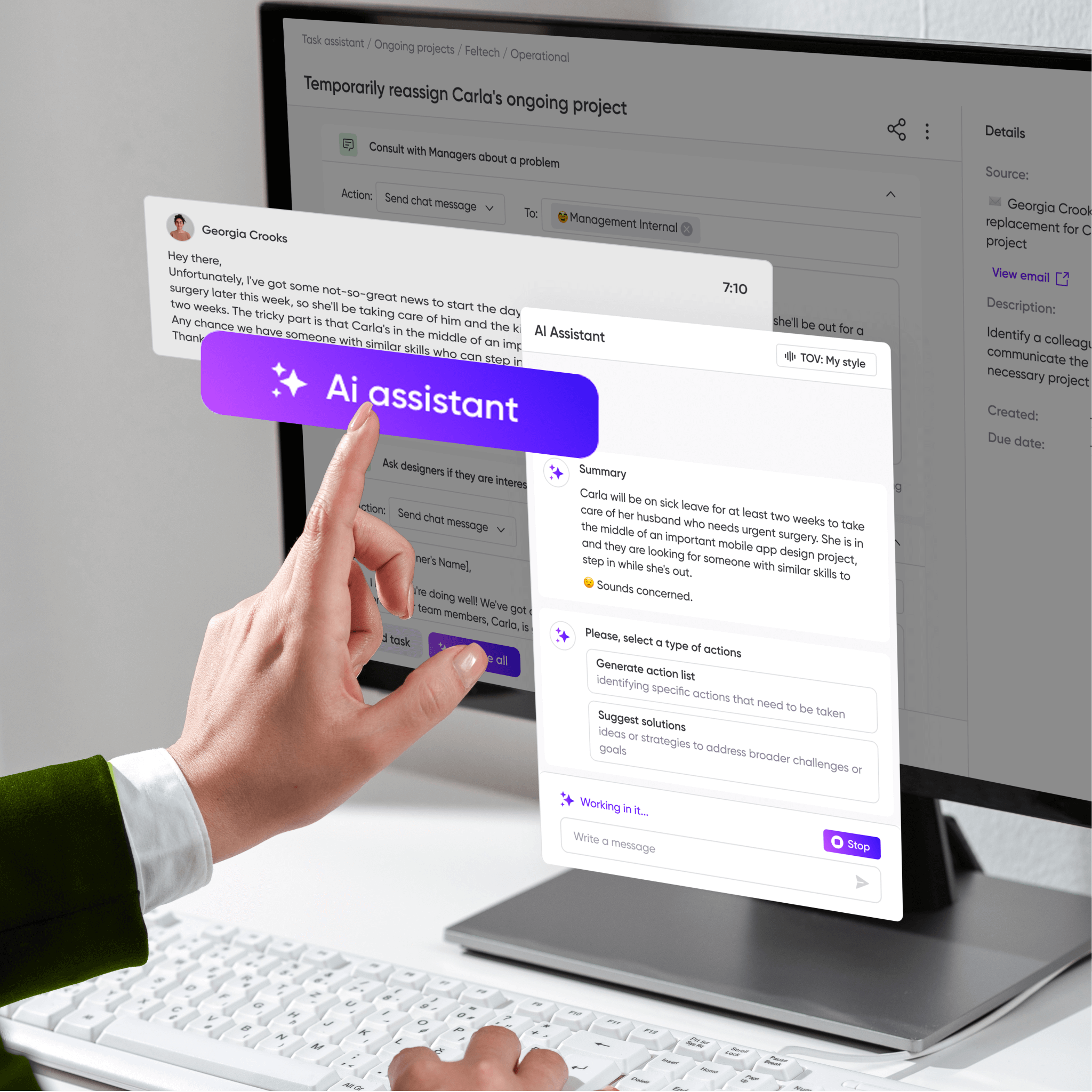

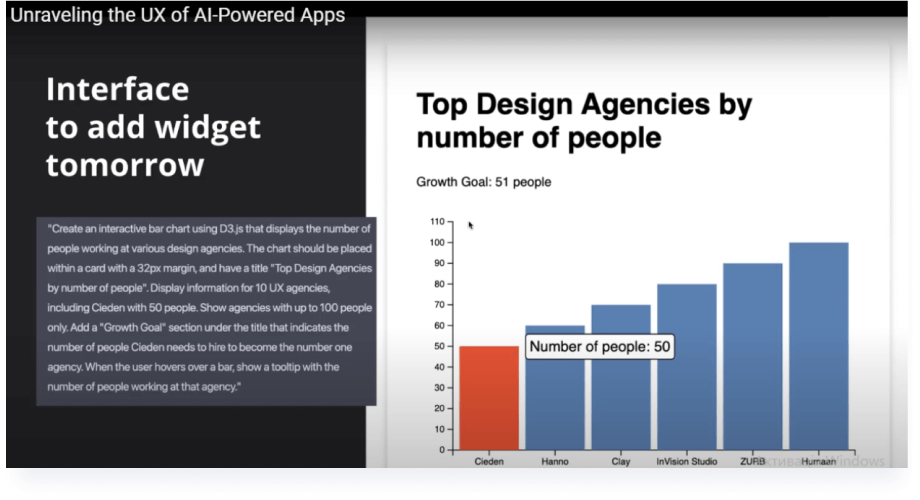

This guide is a summary of Cieden’s observations of AI technology over the past few years. We have not only observed; we have actively used that knowledge to implement AI in design.

Now grab your coffee, and let’s explore the future of UX with AI technology.

How far along are companies with AI?

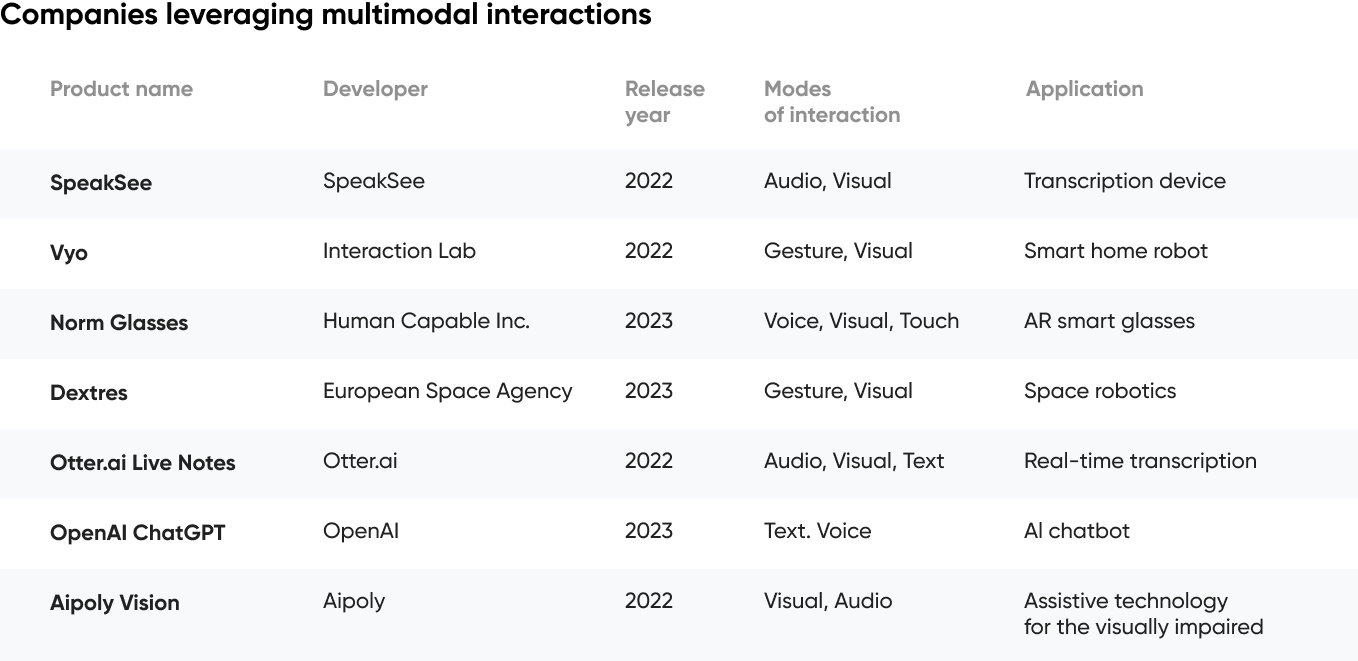

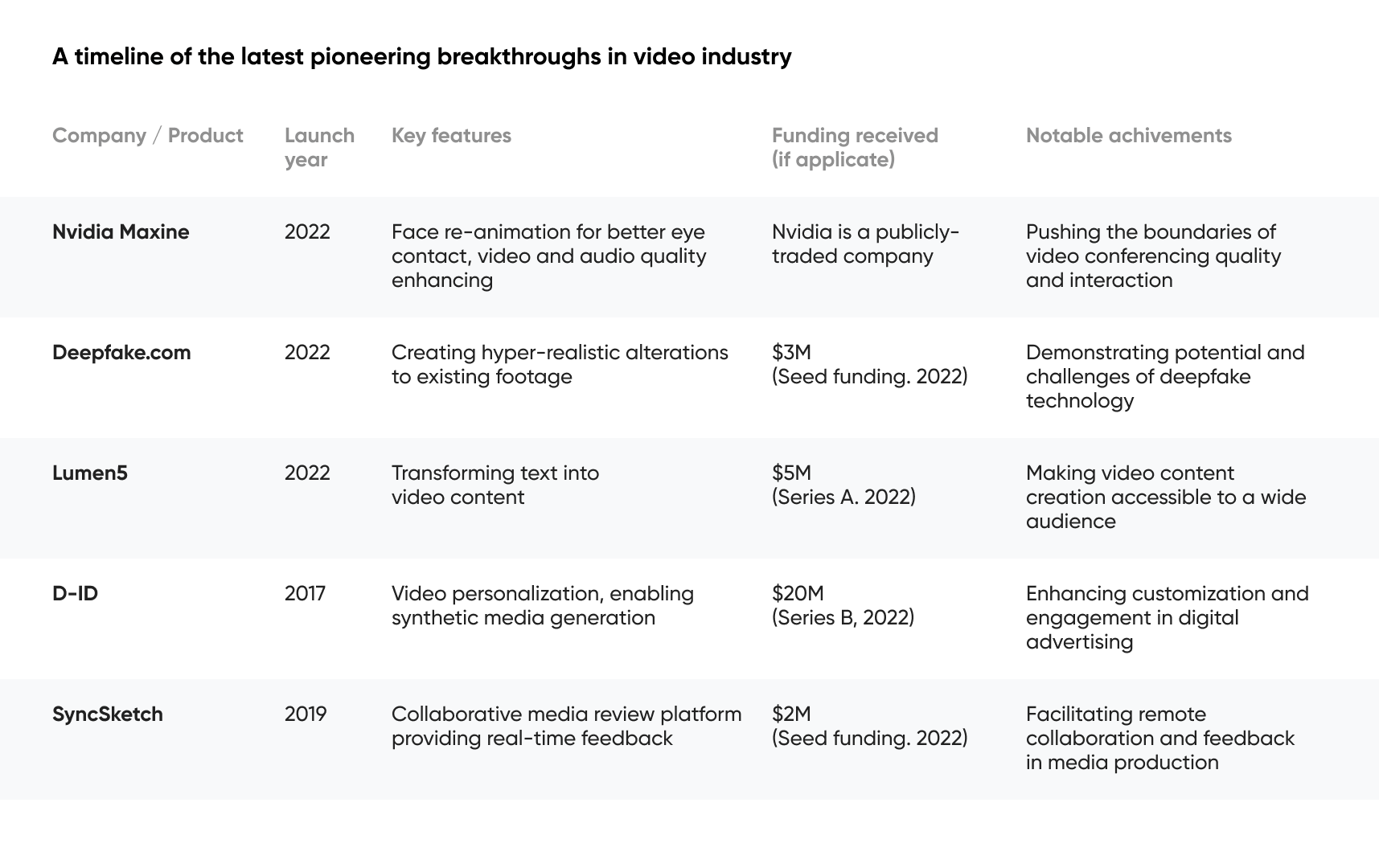

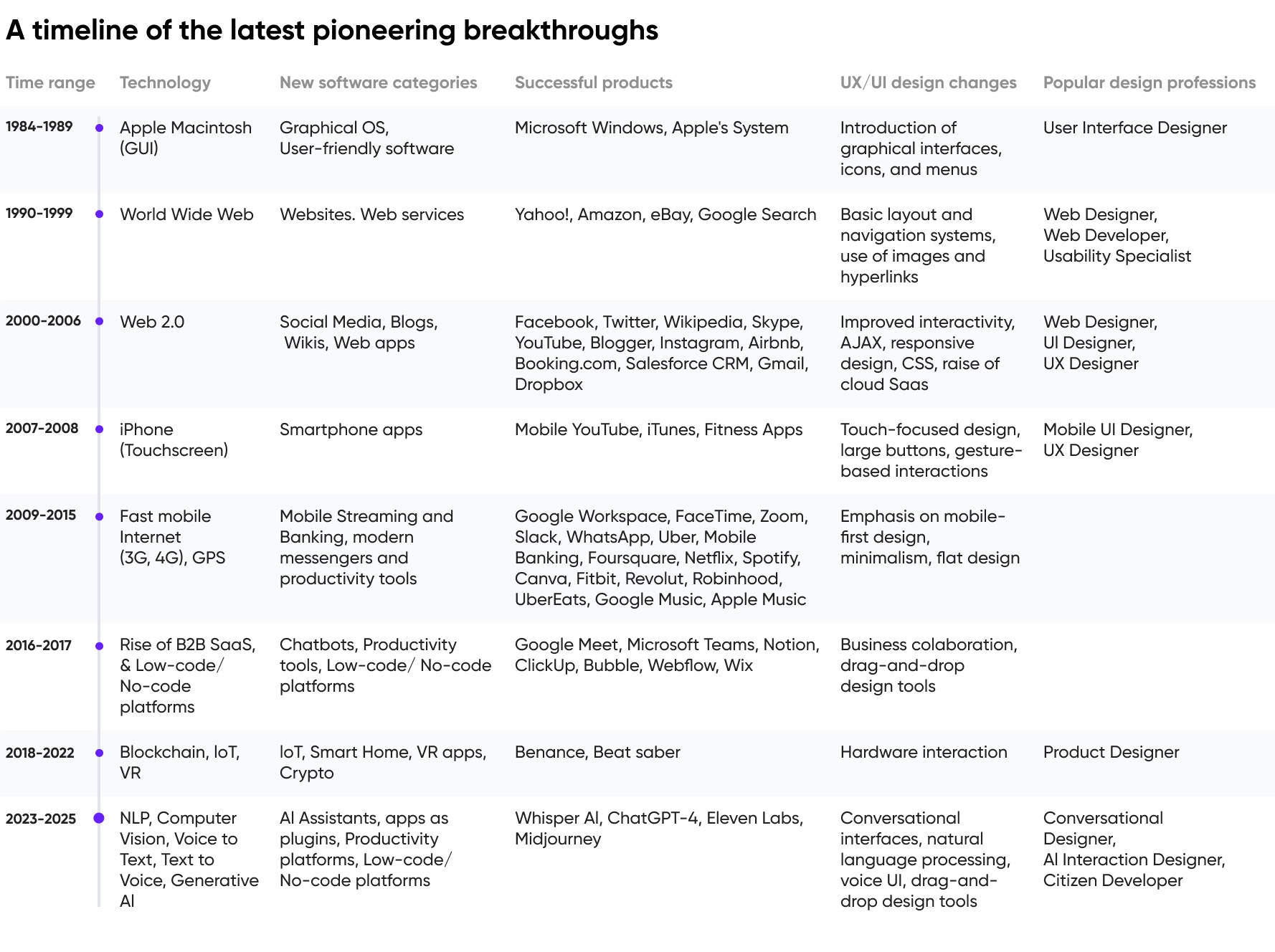

Over the years, technology within the digital landscape has advanced rapidly, and top companies have embraced it in diverse ways.

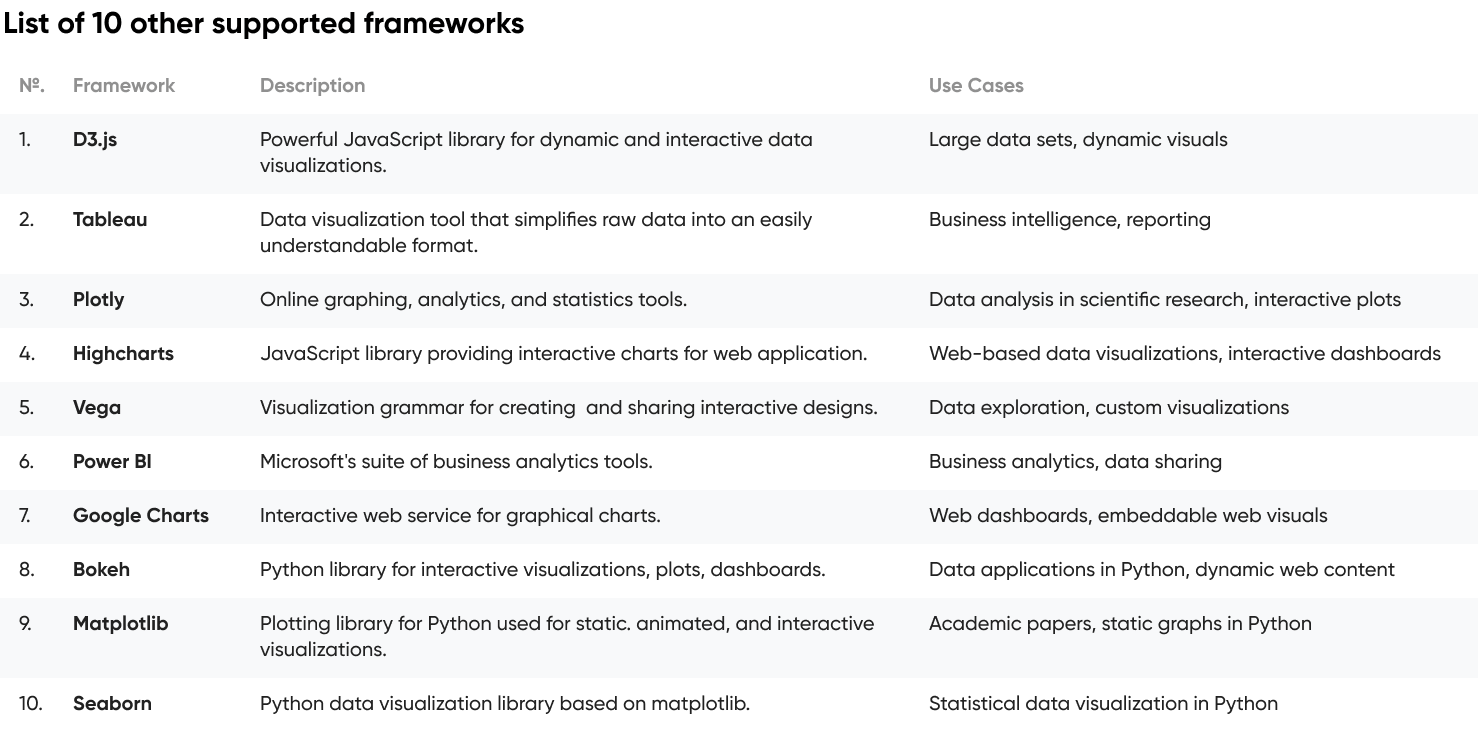

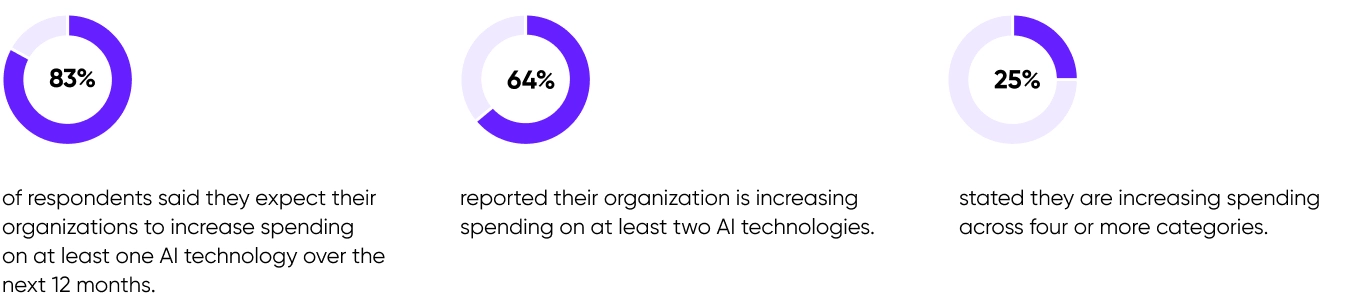

This journey through various technological epochs, from the early days of the internet to the rise of cloud computing, has paved the way for the next significant leap in business technology: the integration of artificial intelligence. According to a May 2023 Forrester Research survey, businesses eager to integrate AI are spreading their investments across various tech niches. The survey, which polled 1,981 global data and analytics leaders, identifies machine learning platforms (77%), machine vision (76%), and AutoML (76%) as the primary investment hotspots.

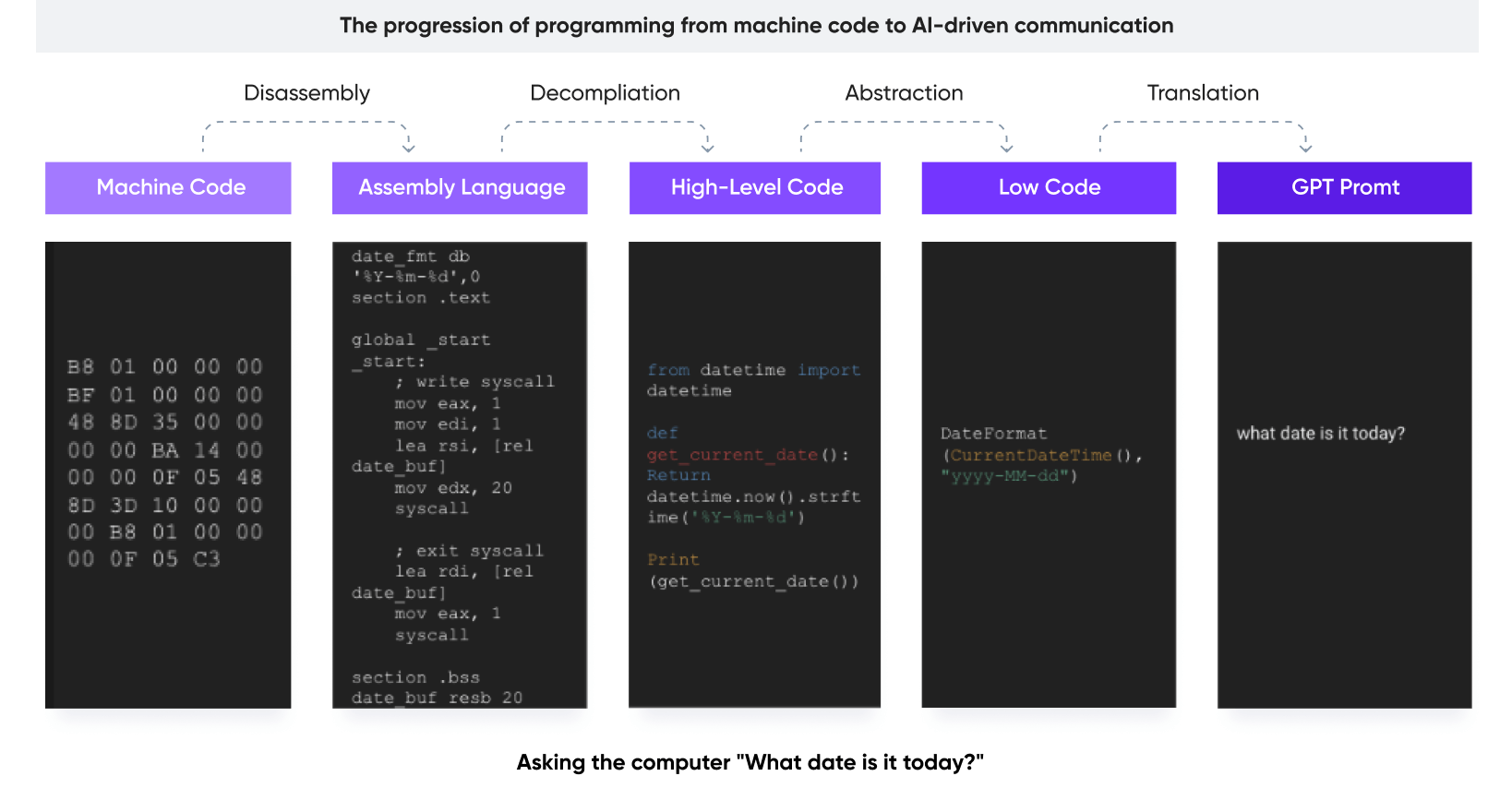

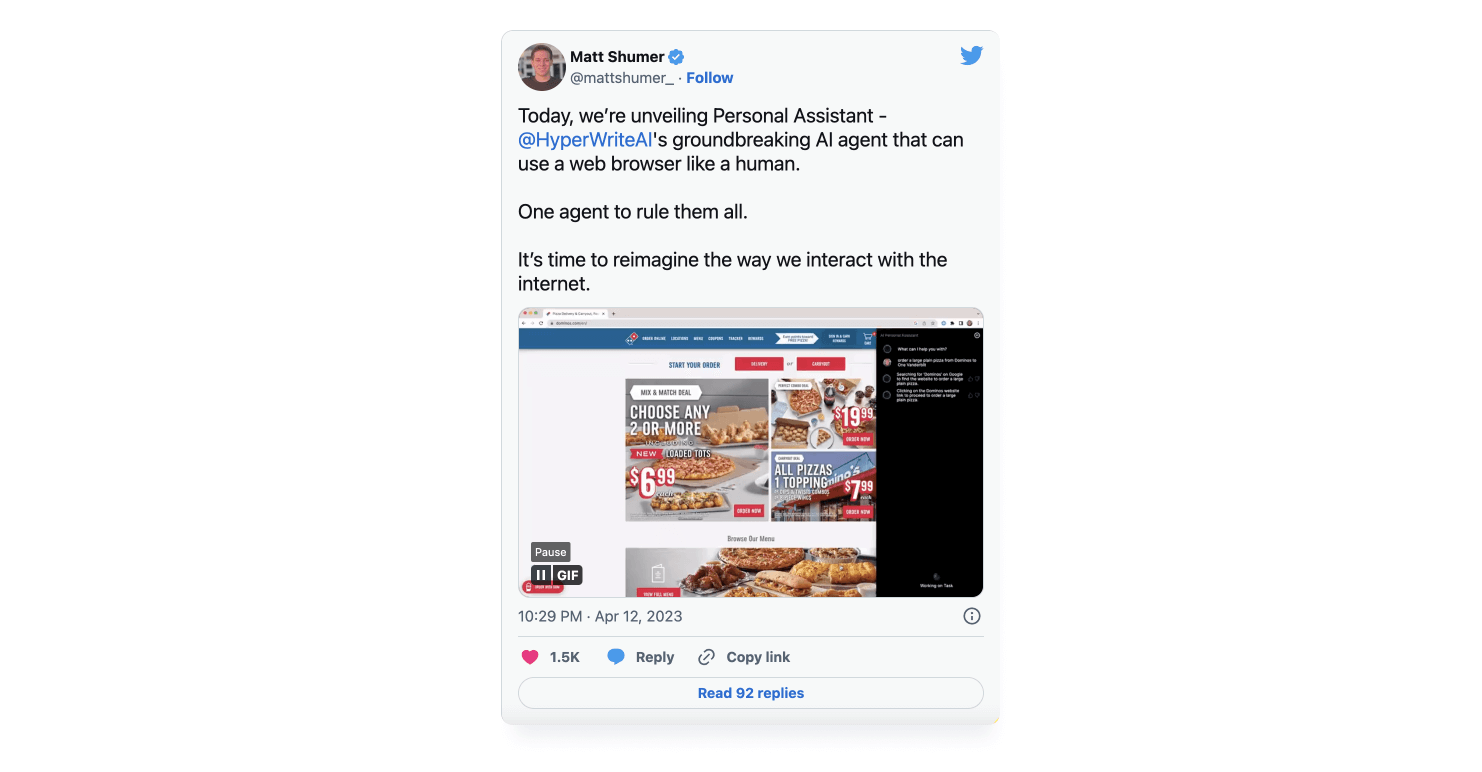

Despite multiple AI breakthroughs over the last four decades, the journey is not complete. Businesses continue to invest in tech niches like machine learning (ML), which is changing the way humans interact with computers. Next up, learn how AI is used to redefine user interaction in real-life digital products.